(If it all becomes a blur, feel free to skip to the end of my history lesson)

>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>>><<<<<<<<<<<<<<<<<<<<<<<<<<<<<<

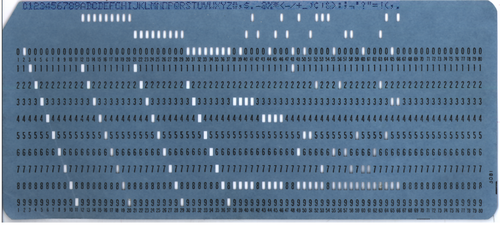

In the early days of computing, there were punchcards. A series (sometimes thousands) of cards with punched rows would be fed into a machine that blinked and hummed, and which would eventually output the desired result. This was first implemented in a digital computing environment in 1938 by the German engineer Konrad Zuse in his "Zuse" machine. Before this, computers were 'analog' machines, meaning they used electricity in handmade circuits to create an analogue, or similarity, to real-life phenomena such as bomb trajectories or water levels. A few years after Zuse, the British developed 'Colossus', a card-input device which was electronically programmable, meaning it could be used for multiple purposes such as code-breaking.

As computers became more sophisticated, the invention of the transistor allowed smaller, cheaper computers to be built, and so new means of interfacing with the technology became necessary. AT&T, which ran the Bell telephone service in the United States, created a research group to develop a communicative and malleable system by which multiple users could access a centralized processing computer. What eventually emerged in 1969 was the first rendition of UNIX, a computing environment where users could run applications from either punched cards or magnetic tape and receive the output of those functions on their terminal. AT&T wanted to market this to the educational community, as well as to the Defense Department, but could not market any product in the 'computer industry' because of an antitrust suit launched against it in 1959 over its monopoly on telecommunications in the United States. (It is interesting to note that AT&T ran the Bell telephone system, which was the parent company of Bell Canada, the company currently vying for the right to usage-based billing for internet in Canada. In 1880, the Canadian government granted Bell Canada an exclusive monopoly over Canadian long-distance telephone communication.)

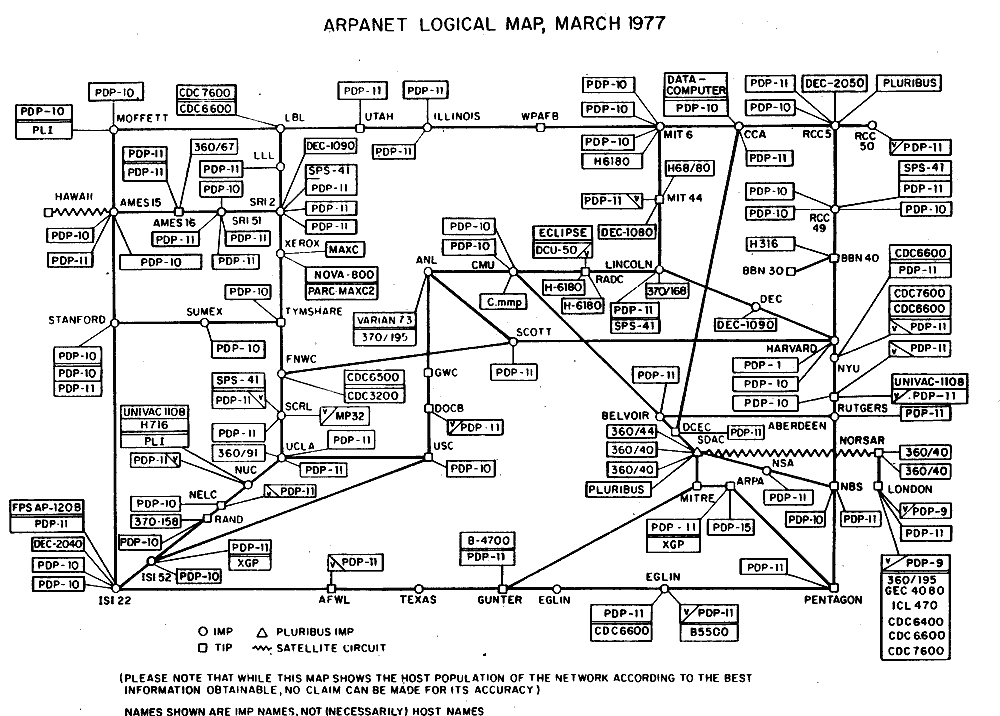

At the time they were built, Unix systems were run in local networks, but within a year the ARPANET (Advanced Research Projects Agency Network) was founded, and in 1969 there were 110 interested parties desiring connections to the ARPANET. The ARPANET was initiated as a research and development forum for the sharing of ideas between laboratories. The initial proposals by J.C.R. Licklider in 1962 described an "Intergalactic Computer Network" and contained many of the foundations for the Internet. As this form of communication became more efficient, and as user input within computing operations became possible, there was a need for an effective means of providing that input. This took the form of the keyboard, which began its life in 1970.

Interestingly enough, the computer mouse actually predates the use of electronic keyboards as an interactive computing device (ignoring, of course, the early analog keyboards that manipulated mechanical calculators). It was developed in 1962 by Douglas Engelbart and Bill English at Stanford for use in their own text-based network system. In fact, they experimented with other forms of manipulation, such as motion detection by cameras and several head-mounted devices, but settled on the mouse because of its ease of use.

Despite many familiar-sounding terms, none of these applications would seem familiar to the modern user of most standard computing schemes today. The true leap towards the modern computing environment began in 1980 with the introduction of the Xerox Star.

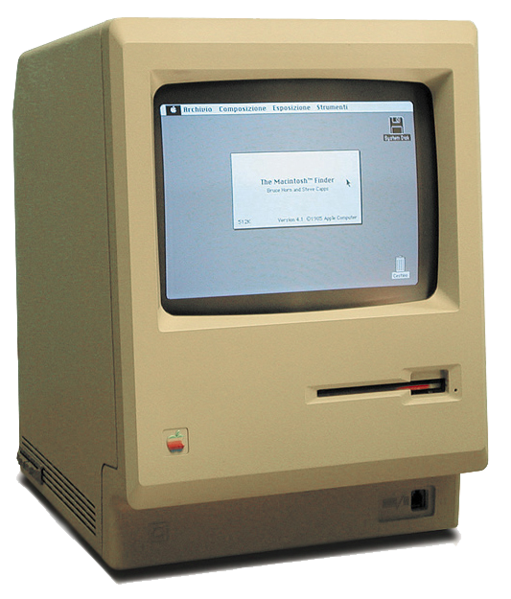

This Prometheus of the Personal Computer sold for $20,000 USD and sported 384 kB of RAM and a 10 MB HDD, utilizing a 4-bit AMD processor. The key to this development was that it was the first system to utilize a GUI (Graphical User Interface). Now users could not only interact in real time by inputting text, but could use a mouse to graphically manipulate objects onscreen. Despite this quantum leap, the Star did not catch on due to its hefty price tag, and within two years Apple Inc. had created the 'LISA', utilizing a GUI and sporting a 5 MHz processor and 1 MB of RAM. Using the Lisa Office System, it paved the way for the Macintosh 128k, an affordable $2,500 Personal Computer which would launch Macintosh System Software (Mac OS) 1.1, the first globally popular Graphical User Interface.

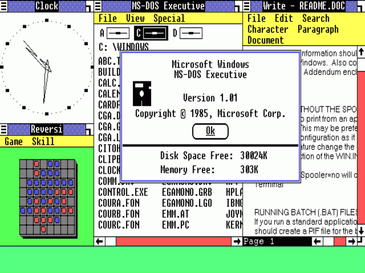

Soon after this, IBM arrived with a more economical version of the Personal Computer. Through updated patent laws, IBM was not able to prevent companies from cloning their software, and so the PC was born. Generic companies created cheap systems running Microsoft's MS-DOS, a non-graphical command-line interface. In 1981 Microsoft developed "Interface Manager", a GUI complement to MS-DOS, but due to issues it was not released until 1985, when it was rebranded "Windows 1.0". Thus began the three-decade battle between Apple and Microsoft for domination of the proprietary Operating System market.

>>>>>>>>>>>>>>>>> If you stopped reading above, continue here <<<<<<<<<<<<<<<<<<<

So what are the implications of everything stated above? Realistically, computer hardware has advanced to the point that CPUs are thousands of times faster, HDDs hold millions of times more data, and for $50 you can get graphics processing units not even available for millions of dollars twenty years ago. Yet despite these vast changes, in the three decades since GUIs became a common feature of operating systems, we have seen little change in the way we operate and interact with computers.

Mobile computing is no different. Despite a touch-screen environment and high portability, mobile devices are still limited to one user per device, and create an isolated environment in which data and applications can be interacted with. In essence, they are no different from any traditional computing application, only more portable.

UUI (Universal User Interfacing) is truly the future of technology in the classroom. Already implemented in technology such as the Smartboard, UUI presents computer input and output in the same digital and physical space, making the classroom the computer. It allows multiple users to share, collaborate, communicate, and create simultaneously, and does not isolate users at single terminals. It also removes the separation between the abstract digital world and the concrete physical world, fusing them into one interactive environment where content can be acquired by waving a hand or asking a question. Applications can be run simultaneously with real-world activities, and creative collaboration becomes fully available and realizable.

As UUI technology becomes a standard, and our current concepts of input and output go by the wayside, so will mobile computing and our preoccupation with using it in a classroom context. Interactive fusion of the digital and the physical is the way we need to go as educators to truly integrate technology into the classroom.

If you haven't been able to follow anything I am saying, or would like to see an interesting video on UUI, check out the following TED Talk:
